AI TOV Assistant for Art Basel

How I channelled ungoverned AI use in Art Basel's Content Marketing Team without adding friction.

AI Governance & Enablement

The Problem — Fragmented AI adoption

As pressure to produce more content increased at Art Basel, with shorter lead times and no added headcount, teams began using AI informally. A company AI program existed, but awareness of it was low.

Adoption in the Content Marketing team grew unevenly. There were no shared standards, no oversight, and no mechanism for collective learning.

The risks were clear: fragmented brand tone across channels, inconsistent output quality, privacy exposure from ungoverned tools, and duplicated effort as individuals solved the same problems in isolation.

The core challenge was behavioral: how to bring structure to an organic practice without adding friction for already stretched teams.

The Design Decisions — Enable and support

The guiding principle was to enable and support, not control. A mandatory approval workflow or central enforcement model would have failed. Governance had to earn adoption by making the team's work better, not harder.

Operating constraints:

The team owned the brand Tone of Voice (TOV), documented in a long, static PDF. Most content was written by the Copywriter, but workload forced others to step in.

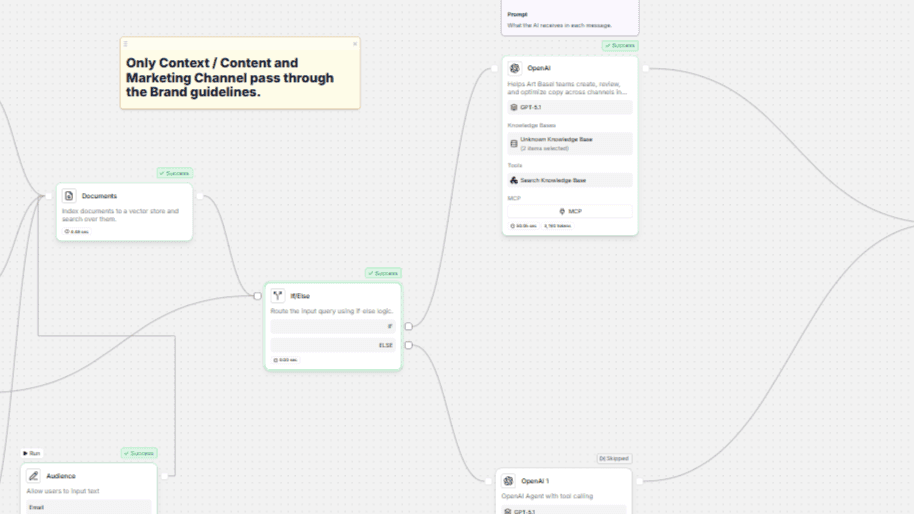

I partnered with the Head of AI & Data to build an AI TOV Assistant for the team. With capacity already stretched, any added process risked feeling like friction. Adoption had to be voluntary, so the tool needed to be clearly more useful than existing ways of working.

01 — Don't block AI use

Rather than discouraging the informal AI use that was already happening, the goal was to redirect it through a safer, better tool. The AI TOV Assistant was trained on Art Basel's official Tone of Voice and brand guidelines, offered better privacy than generic LLMs, and was designed to align naturally with existing content standards. The decision was deliberate: make the governed path the easiest path.

02 — Define what "good" looks like

Rather than creating a central review layer, I issued explicit evaluation criteria for AI-generated content: how to assess TOV alignment, what makes content clear and channel-appropriate, when human editing is required, and how to avoid accepting AI output uncritically. The goal was to distribute quality judgment and give every team member the tools to assess output independently, consistently, and without bottlenecking through a central approver.

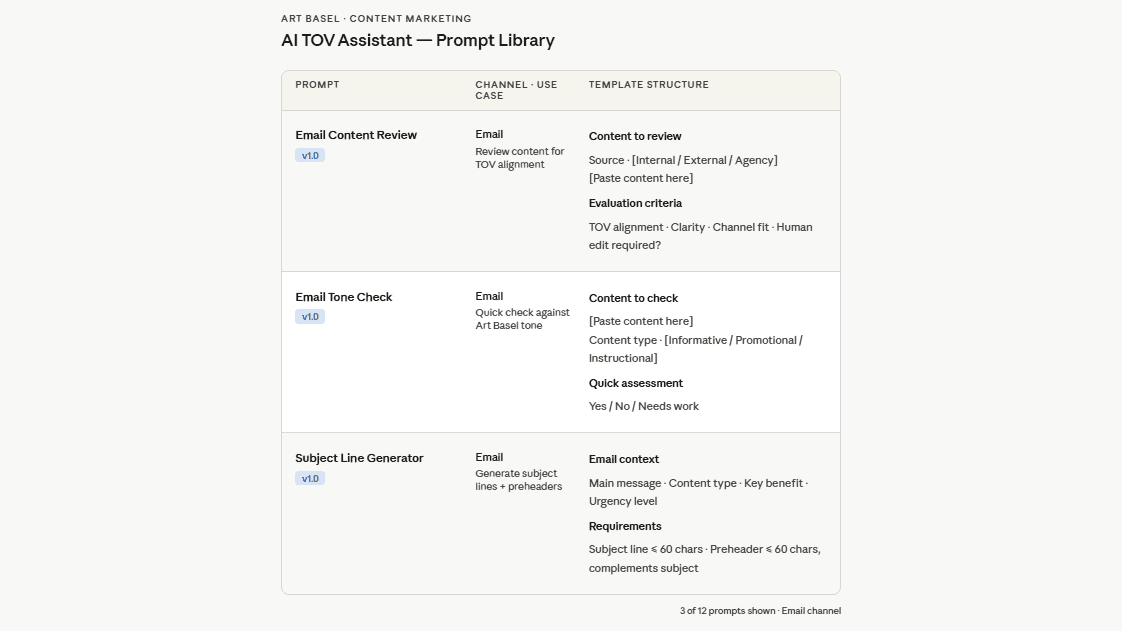

03 — Build prompt libraries collaboratively

We developed prompt libraries for the team. Each library reflected real use cases from our different channels (email, web, and app), which meant team members stayed in the position of domain experts rather than passive users of a central system. This approach also reduced fragmentation: instead of each person improvising prompts independently, we converged on shared starting points that reflected our actual work. The Copywriter remained the guardian of the editorial voice.

04 — Build a feedback loop

The governance model included regular reviews of AI usage, refinement of prompts and evaluation criteria, and structured identification of what worked, what didn't, and where AI added real value. This was the mechanism for turning individual experimentation into organisational learning, and preventing the knowledge fragmentation that had characterised the ungoverned phase.

Results — Adoption by choice. Not by mandate.

We went from no governance framework to a deployed, adopted system

Mutually reinforcing enablement components designed and deployed

Reduced secrecy and fragmentation around AI use across the team

Success was measured through behavioral signals rather than hard metrics, which was the right call at this stage of adoption. Team members voluntarily chose the AI TOV Assistant over personal LLM accounts. Conversations about AI use became more open. Tone and quality became more consistent across channels.

The outcome was a shift from invisible, ungoverned experimentation to visible, shared practice.

The Bigger Insight

"Good AI governance doesn't police output. It raises the quality bar people apply to their own work."

What I'd Do Differently

The program was scoped to the Content Marketing team, but the adoption problem was wider. The Digital Marketing team, responsible for social media and paid search content, faced the same ungoverned AI use and would have benefited from the same framework. The conversation started but never progressed, not for lack of interest but for lack of capacity: the team simply didn't have the bandwidth to take it on.

This surfaces a tension that sits at the heart of AI adoption. Before AI saves time, it costs it. Documenting workflows, selecting tools, testing, and iterating to a usable state all require an upfront investment that stretched teams struggle to make.

The enablement question isn't just "how do we build the program?" It's "how do we create the conditions for teams to engage with it in the first place?"